“Real people don’t really win those contests.”

I know that’s how I feel about those 5 MILLION DOLLARS JUST WAITING FOR YOU IN OUR CUSTOMER SWEEPSTAKES contests that sucker you in. C’mon, those Publishers Clearing House videos of Ed McMahon carrying a giant check weren’t real; they were all filmed on a Hollywood set with actors!

(If your Uncle Joe or your Aunt Martha actually won, you can tell me I’m wrong in the comments, but I don’t think I am, and I don’t think you will.)

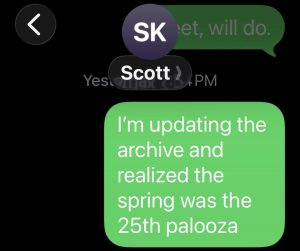

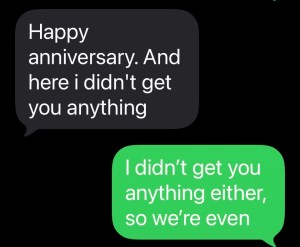

When Derek and I launched our first CMEpalooza events with real prizes, we wanted to make sure they went to real people. Better yet, real people who work in CME (or are at least tangentially affiliated with it). So here are our most recent prize winners.

CMEpalooza STEPtacular Challenge

(Sponsored by Talem Health)

$50 Amazon gift card winners

Amanda Dinsmore, CSM — Business and Project Quality Associate, CE Outcomes

Dana Zook — Associate Director, Program Development and Outcomes, American Society of Health-System Pharmacists

Danielle Harris — Professional Development Administrator, American Society of Clinical Oncology

Emma Shillman — Director of Clinician Engagement, Clinical Connection, LLC

Zach Hall — Client Services Manager, Pri-Med

$250 Amazon gift card winner (most steps in one day)

Joanne Wise — Manager, Continuing Education, The Center for Public Health Continuing Education, SUNY Albany School of Public Health

Joanne stepped 63,368 times in one day. That’s an average of about 2,640 steps per hour, around the clock. Not too shabby.

CMEpalooza Scavenger Hunt

(Sponsored by Academy for Continued Healthcare Learning)

$50 Amazon gift card winners

Rhea Alexis Banks — Admin Assistant, Northwestern University, Feinberg School of Medicine, Office of Continuing Medical Education

Emily Gagnon, PMP — Education Technology Manager, American Society of Cataract and Refractive Surgery

Wendy Macias — Continuing Medical Education Manager, Methodist Healthcare System

Andrea Funk, CHCP — General Manager, Global Education Group

Laura Dalu — Senior Marketing Manager, Vindico Medical Education

Angela Still — Senior Grant Specialist, Vindico Medical Education

Daniel DaSilva — Accreditation and Outcomes Coordinator, Med Learning Group

Christina Billings, MPH, CHCP

Courtney Green — Senior Manager, Education, Canadian Association of Radiologists

Michelle Adams — Accreditation and Joint Providership Manager, American Society of Anesthesiologists

Just for fun, we asked all scavenger hunters to tell us their favorite word in the English language. The winner, oddly enough, was “pivot.” “Love,” “kerfuffle,” “serendipity,” and “copacetic” were also popular choices. I personally enjoyed “pluviophile,” “dingus,” and “cattywampus.”

Feel free to use any or all of these words in your professional conversations today.